Einar

Einar is a group project, developed by a team of students over one year. It is a single-player third-person hack and slash based on Norse mythology, with AAA-quality graphics and gameplay. The player takes on the role of Einar, who is on a quest to kill the inhabitants of a Norse fishing village who have been infected by a mysterious material. The player uses weapons such as the bow, hammer and axe to clear the village of monsters.


What I worked on

Custom Movement

Unreal's character movement component lacked a few features we needed, so I wrote a custom movement component to achieve the following behaviors:

| Smooth rotation when turning, with acceleration and deceleration over the path distance. | Corrected RVO avoidance, which pushed the actor away from a collision without changing its rotation. | The result applied to a group of in-game AI |
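As a rough illustration of the first behavior, the sketch below ramps the speed up and down over the distance travelled along a path and limits how fast the yaw may change. It is plain C++ rather than Unreal code, and every name and tuning value in it (`MoveParams`, `SpeedAlongPath`, `StepYaw`) is hypothetical, not taken from the project.

```cpp
#include <algorithm>
#include <cmath>

// Hypothetical tuning values; none of these names come from the project.
struct MoveParams {
    float maxSpeed = 600.f;      // units per second
    float minSpeed = 50.f;       // keeps the actor moving at both path ends
    float accelDistance = 200.f; // distance over which the actor speeds up
    float decelDistance = 300.f; // distance over which the actor slows down
    float turnRateDeg = 180.f;   // maximum yaw change per second
};

// Target speed as a function of the distance already travelled on the path.
float SpeedAlongPath(const MoveParams& p, float travelled, float pathLength) {
    const float remaining = std::max(pathLength - travelled, 0.f);
    const float rampUp = std::clamp(travelled / p.accelDistance, 0.f, 1.f);
    const float rampDown = std::clamp(remaining / p.decelDistance, 0.f, 1.f);
    return p.minSpeed + (p.maxSpeed - p.minSpeed) * std::min(rampUp, rampDown);
}

// Rotates currentYaw towards targetYaw along the shortest arc, by at most
// turnRateDeg * dt degrees. Assumes both yaws differ by less than 360 degrees.
float StepYaw(const MoveParams& p, float currentYaw, float targetYaw, float dt) {
    float delta = std::fmod(targetYaw - currentYaw + 540.f, 360.f) - 180.f;
    const float maxStep = p.turnRateDeg * dt;
    delta = std::clamp(delta, -maxStep, maxStep);
    return currentYaw + delta;
}
```

Driving the speed from path distance instead of time means the actor always arrives at its destination at the low end of the speed range, regardless of how long the path is.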


Wander & Patrol

The AI spawns in the game and, when not in combat, enters an idle state and starts wandering or patrolling. Thanks to the movement behavior I implemented, I could simply set a destination and the AI would turn and move towards it smoothly.

Wandering

The AI spawns and should wander within its specified combat area. To accomplish this I made special walk volumes; multiple volumes can be placed and assigned to an AI. When wandering, the AI requests a wander spot from one of its volumes at certain intervals and uses it as its target.

The volume picks a random spot inside itself and checks whether that spot is reachable on the navigation mesh; if not, it retries with a new position. This prevents the AI from bumping into objects because its target lies inside them.
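The pick-and-retry step could look roughly like the sketch below. It assumes a box-shaped volume and takes the reachability test as a callback, where the game would query the navigation system instead. `WalkVolume`, `PickWanderSpot` and the attempt limit are hypothetical names and values.

```cpp
#include <functional>
#include <optional>
#include <random>

struct Vec3 { float x, y, z; };

// Axis-aligned stand-in for a walk volume (hypothetical type).
struct WalkVolume {
    Vec3 min;
    Vec3 max;
};

// Picks random points on the floor of the volume until isReachable accepts
// one. Returns nothing if every attempt fails, so the caller can fall back
// to another volume or try again later.
std::optional<Vec3> PickWanderSpot(
    const WalkVolume& volume,
    const std::function<bool(const Vec3&)>& isReachable,
    std::mt19937& rng,
    int maxAttempts = 10) {
    std::uniform_real_distribution<float> distX(volume.min.x, volume.max.x);
    std::uniform_real_distribution<float> distY(volume.min.y, volume.max.y);
    for (int attempt = 0; attempt < maxAttempts; ++attempt) {
        const Vec3 candidate{distX(rng), distY(rng), volume.min.z};
        if (isReachable(candidate)) {
            return candidate;
        }
    }
    return std::nullopt;
}
```

Capping the number of attempts is an assumption of this sketch; it keeps a volume that is entirely blocked from stalling the game.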

Patrol

The AI can also patrol along a spline path. The next node is triggered when the AI comes within a certain radius of the current point, which makes the path less static and allows small deviations when going around objects.

| Wander areas | AI patrolling |
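A minimal sketch of the radius-based node switching, assuming the spline has already been sampled into a list of points and that the patrol loops. `PatrolFollower` and its members are hypothetical names.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

struct Vec2 { float x, y; };

// Follows a looping list of patrol points (for example, sampled from a spline).
struct PatrolFollower {
    std::vector<Vec2> nodes;     // must not be empty
    float acceptRadius = 150.f;  // hypothetical value
    std::size_t current = 0;

    // Call every tick with the AI position; returns the node to steer towards.
    // The target advances as soon as the AI is within acceptRadius of it, so
    // the AI never has to touch the exact point.
    const Vec2& Update(const Vec2& aiPos) {
        const Vec2& node = nodes[current];
        if (std::hypot(node.x - aiPos.x, node.y - aiPos.y) <= acceptRadius) {
            current = (current + 1) % nodes.size();
        }
        return nodes[current];
    }
};
```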


Detect and investigate

I provided the AI with a sense system. It records the following stimuli, each with an age variable. This lets the AI react more naturally and make data-driven decisions.
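One way to picture records with an age is the small memory below: each stimulus stores what was sensed and where, ages every tick, and is forgotten after a maximum age. This is an illustrative sketch in plain C++, and `SenseMemory`, `Stimulus` and `maxAge` are hypothetical names.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

enum class Sense { Sight, Sound, Damage, Impact };

struct Vec3 { float x, y, z; };

struct Stimulus {
    Sense sense;
    Vec3 location;
    float age; // seconds since the stimulus was recorded
};

class SenseMemory {
public:
    explicit SenseMemory(float maxAge) : maxAge_(maxAge) {}

    void Record(Sense sense, const Vec3& location) {
        stimuli_.push_back({sense, location, 0.f});
    }

    // Ages every record and forgets the ones older than maxAge.
    void Tick(float dt) {
        for (Stimulus& s : stimuli_) s.age += dt;
        stimuli_.erase(
            std::remove_if(stimuli_.begin(), stimuli_.end(),
                           [this](const Stimulus& s) { return s.age > maxAge_; }),
            stimuli_.end());
    }

    // Youngest record of the given sense, or nullptr if there is none.
    const Stimulus* Freshest(Sense sense) const {
        const Stimulus* best = nullptr;
        for (const Stimulus& s : stimuli_) {
            if (s.sense == sense && (!best || s.age < best->age)) best = &s;
        }
        return best;
    }

    std::size_t Count() const { return stimuli_.size(); }

private:
    float maxAge_;
    std::vector<Stimulus> stimuli_;
};
```

With ages available, a decision can prefer a fresh sound over an old sighting, or ignore anything that happened too long ago.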

Sight

The AI registers the player when they are within the AI's vision cone and starts attacking. If the player leaves the field of vision, the AI moves to the last known player location to investigate.
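A vision cone test usually comes down to a distance check and a dot product. The sketch below shows that idea in 2D; it leaves out the line-of-sight trace a game would normally add, and the function name and parameters are hypothetical.

```cpp
#include <cmath>

struct Vec2 { float x, y; };

// Returns true when target lies within maxDistance of eye and within
// halfAngleDeg of the (normalized) forward direction.
bool InVisionCone(const Vec2& eye, const Vec2& forward, const Vec2& target,
                  float halfAngleDeg, float maxDistance) {
    const float dx = target.x - eye.x;
    const float dy = target.y - eye.y;
    const float dist = std::hypot(dx, dy);
    if (dist > maxDistance) return false;
    if (dist < 1e-4f) return true; // target is on top of the eye
    // Cosine of the angle between forward and the direction to the target.
    const float cosToTarget = (dx * forward.x + dy * forward.y) / dist;
    const float degToRad = 3.14159265f / 180.f;
    return cosToTarget >= std::cos(halfAngleDeg * degToRad);
}
```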

Vision is also used for pathing nodes. Instead of relying directly on pathfinding, I store the player's location at certain intervals so that the AI chases the player along the route they actually took, without cutting corners and appearing in front of them.
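The interval-based position log can be sketched as a small queue of breadcrumbs: the oldest stored position is the next chase target. The class name, the interval and the crumb limit are assumptions made for the illustration.

```cpp
#include <cstddef>
#include <deque>

struct Vec3 { float x, y, z; };

class BreadcrumbTrail {
public:
    BreadcrumbTrail(float interval, std::size_t maxCrumbs)
        : interval_(interval), maxCrumbs_(maxCrumbs) {}

    // Call every tick while the player is visible. Stores the player
    // position once per interval and drops the oldest crumb when full.
    void Observe(const Vec3& playerPos, float dt) {
        timer_ += dt;
        if (timer_ < interval_) return;
        timer_ = 0.f;
        crumbs_.push_back(playerPos);
        if (crumbs_.size() > maxCrumbs_) crumbs_.pop_front();
    }

    bool HasNext() const { return !crumbs_.empty(); }

    // Removes and returns the oldest crumb. Requires HasNext().
    Vec3 PopNext() {
        const Vec3 crumb = crumbs_.front();
        crumbs_.pop_front();
        return crumb;
    }

private:
    float interval_;
    std::size_t maxCrumbs_;
    float timer_ = 0.f;
    std::deque<Vec3> crumbs_;
};
```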

Sound

The player emits sound that scales with their movement, and the AI can pick it up. The AI can also register sound produced by other AI. The AI moves towards the sound location to investigate.

Damage received

The player can shoot or hit the AI with their weapon. When the AI is hit, it rotates towards the direction of impact. If it has not yet detected the player, it moves in the direction the hit came from and looks around.

Impact

With this system stealth could be an option, so I added impact as a sense as well. When the player walks into the AI, the AI feels the contact, turns towards the impact location and sees the player.

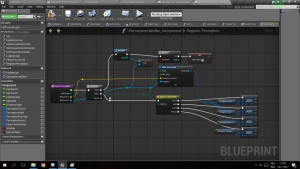

Ranged AI

The ranged AI is one of the three AI types in the game, and it behaves differently from the other two. The others make full use of an overseer system that directs their every move; the ranged AI does not. It acts on its own and only uses the Overseer to know when it may throw a projectile at the player.

In combat the AI has two stages: close and ranged.

Ranged attack

If the AI is allowed to attack the player, it uses EQS (Unreal's Environment Query System) to find a position with a line of sight to the player and throws a projectile. Using projectile motion, I calculate the player's future position and let the AI aim at it. The projectile is fired from a hand socket location, and an animation keyframe event calls the function.
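One simple way to aim at a future position is sketched below. It assumes a fixed flight time, a constant target velocity and gravity along the negative z axis, which may differ from what the game does; all function names are hypothetical. From z(T) = z0 + vz·T − ½·g·T², the vertical launch speed follows as vz = (zTarget − z0)/T + ½·g·T.

```cpp
#include <cmath>

struct Vec3 { float x, y, z; };

// Where the target will be after t seconds, assuming constant velocity.
Vec3 PredictPosition(const Vec3& pos, const Vec3& vel, float t) {
    return {pos.x + vel.x * t, pos.y + vel.y * t, pos.z + vel.z * t};
}

// Launch velocity that reaches target after flightTime seconds under
// gravity (a positive magnitude, acting along -z).
Vec3 LaunchVelocity(const Vec3& start, const Vec3& target,
                    float flightTime, float gravity) {
    return {(target.x - start.x) / flightTime,
            (target.y - start.y) / flightTime,
            (target.z - start.z) / flightTime + 0.5f * gravity * flightTime};
}

// Combines both steps: aim at where the target will be when the projectile lands.
Vec3 AimAtMovingTarget(const Vec3& start, const Vec3& targetPos,
                       const Vec3& targetVel, float flightTime, float gravity) {
    const Vec3 future = PredictPosition(targetPos, targetVel, flightTime);
    return LaunchVelocity(start, future, flightTime, gravity);
}
```

In this sketch `start` would be the hand socket location, and the call would happen inside the animation event that releases the projectile.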

This attack has additional tweaks:

- Min/max range restriction: there is a limit to how far the AI can throw.

- Miss chance over distance: the farther away the player is, the more likely the AI is to miss.

When the AI is not allowed to attack, it taunts the player and/or moves around.
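The two tweaks can be sketched as a range gate plus a miss chance that grows with distance. The linear ramp and all values below are assumptions for illustration; the game may use a different curve.

```cpp
#include <algorithm>
#include <random>

// Hypothetical tuning values.
struct ThrowTuning {
    float minRange = 400.f;
    float maxRange = 2000.f;
    float missChanceAtMin = 0.05f;
    float missChanceAtMax = 0.6f;
};

// The throw is only allowed between the minimum and maximum range.
bool InThrowRange(const ThrowTuning& t, float distance) {
    return distance >= t.minRange && distance <= t.maxRange;
}

// Miss chance interpolated linearly between the two range limits.
float MissChance(const ThrowTuning& t, float distance) {
    const float alpha = std::clamp(
        (distance - t.minRange) / (t.maxRange - t.minRange), 0.f, 1.f);
    return t.missChanceAtMin + (t.missChanceAtMax - t.missChanceAtMin) * alpha;
}

// Rolls once per throw; true means this throw should miss.
bool RollMiss(const ThrowTuning& t, float distance, std::mt19937& rng) {
    std::uniform_real_distribution<float> roll(0.f, 1.f);
    return roll(rng) < MissChance(t, distance);
}
```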

Close attack

When the player comes too close, the AI can perform a close attack in which it emits projectiles from its mouth until the attack ends. Using a mouth socket, I calculate the initial velocity of each projectile from the forward vector with a cone offset. This ability has a cooldown; while it is on cooldown, the AI tries to flee, using EQS to find a position away from the player.
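A forward vector with a cone offset can be produced by picking a random direction inside a cone around the forward axis, as in the sketch below. Sampling uniformly over the cone is an assumption; the helper names are hypothetical, and the initial velocity would be the returned direction multiplied by a launch speed.

```cpp
#include <algorithm>
#include <cmath>
#include <random>

struct Vec3 { float x, y, z; };

Vec3 Scale(const Vec3& v, float s) { return {v.x * s, v.y * s, v.z * s}; }
Vec3 Add(const Vec3& a, const Vec3& b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
float Dot(const Vec3& a, const Vec3& b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 Cross(const Vec3& a, const Vec3& b) {
    return {a.y * b.z - a.z * b.y, a.z * b.x - a.x * b.z, a.x * b.y - a.y * b.x};
}
Vec3 Normalize(const Vec3& v) { return Scale(v, 1.f / std::sqrt(Dot(v, v))); }

// Returns a unit vector at most halfAngleRad away from forward.
// forward must be normalized.
Vec3 RandomDirectionInCone(const Vec3& forward, float halfAngleRad,
                           std::mt19937& rng) {
    std::uniform_real_distribution<float> unit(0.f, 1.f);
    // Uniform over the spherical cap: cos(theta) is uniform in [cos(half), 1].
    const float cosTheta = 1.f - unit(rng) * (1.f - std::cos(halfAngleRad));
    const float sinTheta = std::sqrt(std::max(0.f, 1.f - cosTheta * cosTheta));
    const float phi = unit(rng) * 6.2831853f;
    // Build two axes perpendicular to forward.
    const Vec3 helper = std::fabs(forward.z) < 0.99f ? Vec3{0.f, 0.f, 1.f}
                                                     : Vec3{1.f, 0.f, 0.f};
    const Vec3 right = Normalize(Cross(helper, forward));
    const Vec3 up = Cross(forward, right);
    return Add(Scale(forward, cosTheta),
               Add(Scale(right, sinTheta * std::cos(phi)),
                   Scale(up, sinTheta * std::sin(phi))));
}
```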

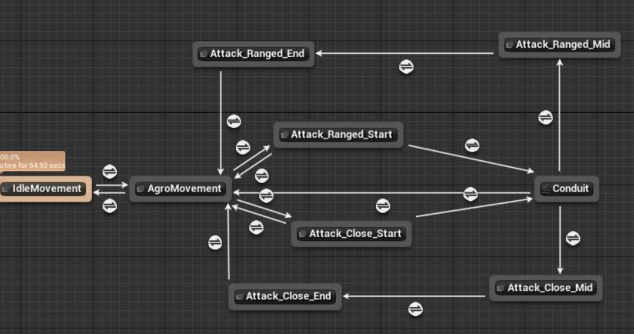

Blending Attacks

Taking inspiration from the observer pattern, I set up events between the animation system and the behavior tree; the events are either instantiated or broadcast as a call. This allowed me to exit behaviors early, switch between them and blend the change into the animation system without canceling animations.

I cut the animations into decision and attack clips. During decision clips (the attack start) the player could move out of range or too close. Depending on the player's position, I could blend the animation into a short-range attack or stop, without having to cancel the animation first and start a new one. Once the animation had transitioned into the attack clip, it could no longer change.

| The attack start clips are "decision" clips. During these clips the behavior tree (BT) is allowed to switch behavior. The conduit branches depending on the BT result and the animation blends accordingly. | The result in-game |
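The rule that a choice may change during the decision clip but is locked once the attack clip starts can be modelled as a small state machine. The sketch below is a simplified stand-in for the interplay between behavior tree and animation system; every name in it is hypothetical.

```cpp
enum class AttackPhase { Idle, Decision, Attack };
enum class AttackChoice { Ranged, Close, Abort };

class AttackBlend {
public:
    // Starts the decision clip with an initial choice.
    void Begin(AttackChoice initial) {
        phase_ = AttackPhase::Decision;
        choice_ = initial;
    }

    // Event from the behavior tree. The request is only accepted while
    // the decision clip is playing.
    bool RequestChange(AttackChoice choice) {
        if (phase_ != AttackPhase::Decision) return false;
        choice_ = choice;
        return true;
    }

    // Event from the animation: the decision clip has ended. The choice is
    // now committed, or the attack is dropped if the choice was Abort.
    void OnDecisionClipEnd() {
        if (phase_ != AttackPhase::Decision) return;
        phase_ = (choice_ == AttackChoice::Abort) ? AttackPhase::Idle
                                                  : AttackPhase::Attack;
    }

    // Event from the animation: the attack clip has ended.
    void OnAttackClipEnd() { phase_ = AttackPhase::Idle; }

    AttackPhase Phase() const { return phase_; }
    AttackChoice Choice() const { return choice_; }

private:
    AttackPhase phase_ = AttackPhase::Idle;
    AttackChoice choice_ = AttackChoice::Abort;
};
```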


Puddles

The AI's attacks create AOE puddles on the ground that damage and slow the player. I added a cooldown timer to the function that applies the damage, so that the AOE damage stays consistent even when puddles overlap.

Each puddle consists of a decal texture, so that it wraps around the terrain, and a sphere collision to apply the damage.

Projectile

Used by both attacks, the projectile has a sphere collision for hit detection. On hit it spawns the puddle and emits a growing sphere for splash damage.
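The effect of such a cooldown can be sketched as follows: every overlapping puddle calls the same damage function, but damage only lands when the cooldown has run out. Placing the timer on the damage receiver is an assumption of this sketch, and the class and member names are hypothetical.

```cpp
#include <algorithm>

class PuddleDamageReceiver {
public:
    PuddleDamageReceiver(float health, float cooldown)
        : health_(health), cooldown_(cooldown) {}

    // Advances the cooldown timer; call once per frame.
    void Tick(float dt) { cooldownLeft_ = std::max(0.f, cooldownLeft_ - dt); }

    // Called by every puddle that overlaps the receiver. Damage is applied
    // at most once per cooldown window, however many puddles overlap.
    bool ApplyPuddleDamage(float damage) {
        if (cooldownLeft_ > 0.f) return false;
        health_ -= damage;
        cooldownLeft_ = cooldown_;
        return true;
    }

    float Health() const { return health_; }

private:
    float health_;
    float cooldown_;
    float cooldownLeft_ = 0.f;
};
```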